BACKGROUND AND AIMS

Artificial intelligence (AI) has shown promise in medical education,1-3 yet its ability to predict residency application outcomes in dermatology has not been studied. The authors sought to evaluate whether a deep neural network could accurately predict dermatology interview offers from multivariable applicant and program data.

MATERIALS AND METHODS

The authors developed a dual-tower neural network in Python (Python Software Foundation, Beaverton, Oregon, USA) using dermatology application data from the Texas STAR database (n = 74,534 applications; 943 applicants; 144 programs). One tower encoded applicant-level features, including Step 2 Clinical Knowledge (CK) scores, research productivity, geographic factors, and signaling behavior. The second tower encoded program-level features, including institutional ranking and program size. Model performance was assessed by comparing predicted interview offer rates across several key applicant and program variables.4
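As an illustration of the dual-tower idea only, the following is a minimal, framework-free Python sketch. It is not the authors' implementation: the layer sizes, the tanh activation, the dot-product combination of the two embeddings, the random untrained weights, and the names `DualTower`, `make_layer`, and `dense_tanh` are all assumptions made for this example, and the feature vectors are hypothetical scaled values.

```python
import math
import random


def make_layer(n_in, n_out, rng):
    """Create one dense layer as (weights, biases) with small random weights."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def dense_tanh(x, layer):
    """Apply a dense layer followed by tanh, returning an embedding vector."""
    weights, biases = layer
    return [
        math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(weights, biases)
    ]


class DualTower:
    """Toy two-tower scorer: one tower per side, combined by a dot product.

    Assumption: the source does not state how the towers are combined;
    a dot product passed through a sigmoid is one common choice.
    """

    def __init__(self, n_applicant, n_program, embed_dim=4, seed=0):
        rng = random.Random(seed)
        self.applicant_tower = make_layer(n_applicant, embed_dim, rng)
        self.program_tower = make_layer(n_program, embed_dim, rng)

    def predict(self, applicant, program):
        """Return an (untrained) interview-offer probability in (0, 1)."""
        a = dense_tanh(applicant, self.applicant_tower)
        p = dense_tanh(program, self.program_tower)
        score = sum(ai * pi for ai, pi in zip(a, p))
        return 1.0 / (1.0 + math.exp(-score))


# Hypothetical, already-scaled inputs (not drawn from Texas STAR):
# applicant = [Step 2 CK, research output, same-region flag, signaled flag]
# program   = [institutional ranking, program size]
model = DualTower(n_applicant=4, n_program=2)
probability = model.predict([0.7, 0.4, 1.0, 1.0], [0.8, 0.3])
```

Because the weights here are random, `probability` carries no predictive meaning; in practice the towers would be trained on labeled application outcomes, typically with a deep learning library.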

RESULTS

Away rotations were the strongest predictor of interview offers (86.1% offer rate versus 10.6% without). Program signaling was also highly predictive (49.2% versus 12.5%; Figure 1). Step 2 CK scores of 270–274 were associated with the highest interview rates (31.9%), while scores lower than 250 corresponded to rates under 11.6%. Geographic differences were also notable: applicants from the New England region had the highest interview rate (17.3%), whereas applicants from the Pacific Northwest had the lowest (9.9%). Collectively, these findings indicate that both applicant behaviors and regional/program context contribute meaningfully to interview outcomes.
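Subgroup comparisons of this kind amount to averaging observed offers and predicted probabilities within each level of a variable. The sketch below shows one way this could be tabulated; the function name `offer_rates_by_group`, the record fields, and the toy records are hypothetical and do not reproduce the study data or the authors' code.

```python
from collections import defaultdict


def offer_rates_by_group(records, group_key, value_key):
    """Average `value_key` within each level of `group_key`."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for rec in records:
        totals[rec[group_key]] += rec[value_key]
        counts[rec[group_key]] += 1
    return {group: totals[group] / counts[group] for group in counts}


# Made-up applications: `offered` is the observed outcome (0 or 1),
# `predicted` is a model's predicted offer probability.
records = [
    {"away_rotation": True, "offered": 1, "predicted": 0.9},
    {"away_rotation": True, "offered": 1, "predicted": 0.8},
    {"away_rotation": False, "offered": 0, "predicted": 0.1},
    {"away_rotation": False, "offered": 1, "predicted": 0.3},
]

observed = offer_rates_by_group(records, "away_rotation", "offered")
predicted = offer_rates_by_group(records, "away_rotation", "predicted")
```

Comparing `observed` with `predicted` for each group gives a simple check of whether the model's average predicted rate tracks the empirical offer rate within that subgroup.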

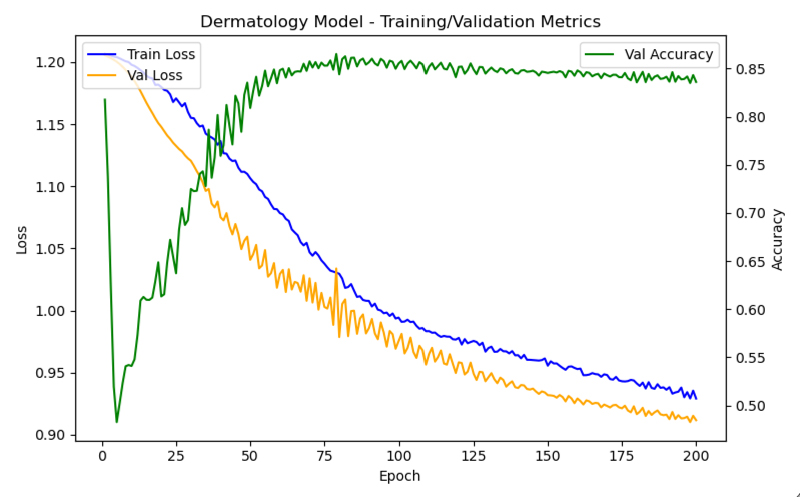

Figure 1: Dermatology model training and validation metrics across epochs.

Val: validation.

CONCLUSION

A dual-tower neural network trained on a large, multi-institutional dataset demonstrated strong predictive performance for dermatology interview offers. The model identified away rotations, signaling, Step 2 CK score range, and geographic origin as significant determinants of interview rates. These findings suggest that AI-based tools have the potential to serve as a valuable resource for data-driven advising in the dermatology residency match process. Importantly, this model is intended to augment, not replace, holistic applicant review by mentors and programs. Because Texas STAR data are self-reported and historically derived, unmeasured confounding and reporting bias may affect model estimates. External validation across future match cycles and independent datasets is needed to confirm generalizability and assess equity across applicant subgroups. If prospectively validated, this framework could support personalized advising, strategic signaling decisions, and more transparent applicant-program alignment.5,6