Abstract

Background: Healthcare organisations are experiencing significant challenges and delays in radiological reporting and clinical assessment. AI tools can assist the interpretation of chest X-rays and enable risk stratification.

Objective: To assess patient perspectives on the use of AI in chest X-ray interpretation, focusing on their knowledge, comfort levels, and preferences regarding AI-assisted radiological care.

Methods: A patient survey on AI use in chest X-ray interpretation was conducted at a single UK tertiary hospital. Patients attending their chest X-ray appointment were invited to participate. The survey captured information on their knowledge and perceptions of AI use in the chest X-ray service. Responses were summarised using descriptive statistics. Free-text comments were interpreted using thematic analysis. Correlation analysis assessed relationships between responses.

Results: Of the 175 patients who participated in the study, 149 completed the survey in full. Overall, 52.1% reported being comfortable with the use of AI in their care, 24.9% were neutral, and 21.9% were uncertain. Only two participants objected to the use of AI in their care. Most (92.6%) would prefer a chest X-ray AI tool to be used as a decision aid, rather than reporting autonomously (4.9%) or not being used at all (2.5%). Patients with higher self-reported knowledge of AI were more likely to be comfortable with the use of AI in their care (p=0.003; τb=0.24). The open-ended responses showed that participants generally accepted the AI’s ability to improve care; however, many indicated that further information was needed.

Conclusion: Patients who self-reported greater knowledge of AI were more likely to accept its use in chest X-ray reporting and prioritisation. This finding highlights the importance of improving public understanding through clear communication and education.

Key Points

1. Understanding patient views can support the successful integration of AI into clinical workflows. This study surveyed 175 patients attending chest X-ray appointments to assess their views regarding AI being used in their care.

2. This study highlights that most patients surveyed supported AI use in chest X-ray reporting, especially when used alongside human expertise. They also approved of AI use for triage to prioritise patients who need urgent clinical evaluation.

3. Findings showed that acceptance of AI use was correlated with patients’ self-reported knowledge of AI. Clear communication and educational efforts can enhance patients’ understanding and comfort with the use of AI in their care.

INTRODUCTION

With the ever-increasing need to improve efficiency and efficacy in clinical care, AI has been brought to the forefront of healthcare, especially in medical imaging.1 In the year 2023/2024, 47.2 million images required processing within NHS England.2 Of these, general practitioner referrals for chest radiographs accounted for approximately 2.2 million images.2 A small minority of these images will contain indications of serious disease, most notably lung cancer, which requires urgent reporting and further action. Until recently, there were no objective means of risk-stratifying large image sets, which would enable clinical staff to report the images of most concern first. AI techniques could assist by prioritising radiographic images for clinician review and identifying areas of suspicion.

Radiology reporting is facing significant challenges. Understaffing and increased demand have led to longer waiting times for radiological review,3 while subsequent outsourcing has resulted in high costs.4 The UK has a 29% shortfall of clinical radiologists, which is estimated to increase to 39% by 2029.4 In 2022/2023, over 700,000 imaging investigations performed in NHS England were not reported within the target of 28 days, a 31% increase from the previous year.3 The cost of managing excess reporting demand in the UK in 2024 was estimated at 325 million GBP.4 AI may help alleviate this burden and enhance service delivery by reducing reporting time and prioritising images of high-risk patients. This could reduce time to diagnosis and treatment for a range of conditions, including lung cancer and other critical illnesses, improving patient outcomes.

Studies of clinician perceptions on the implementation of AI in clinical settings suggest an optimistic view; however, concerns include the quality and safety of AI systems.5 In recent years, the importance of considering patients’ views on AI used in their care has been recognised.6,7 Findings from surveys suggest that patients would like to know how AI is incorporated into their care, while concerns include lack of human supervision, data privacy, and accountability.6 One of the main underpinnings of medicine is informed consent and the establishment of duty of care. With the introduction of AI, careful consideration of confidentiality and informed consent is required.8

A survey of patient perceptions of the use of AI in the assessment of their radiographs was undertaken. The principal goal of this survey was to provide evidence for future decisions on the implementation of AI into the healthcare system, ensuring that care is tailored to patient needs.

METHODS

Context

The Grampian Radiology Assisted Chest X-Ray Evaluation (GRACE) project prospectively evaluated AI use for chest X-rays in NHS Grampian, UK. The project evaluated Annalise Enterprise CXR (now Harrison.ai chest X-ray), an AI tool developed by Annalise.ai (now Harrison.ai, Sydney, Australia) that is capable of identifying 124 clinical findings.9 The primary evaluation period covered chest X-rays conducted between May 2023 and April 2024. The AI tool was fully integrated into the chest X-ray workstream, reading all images and sorting them according to risk. The highest risk images were those identified by the AI as possible lung cancers. These were urgently reported by a small number of radiologists.

The NHS Grampian evaluation, assessing Annalise CXR as a reporting triage, clinical decision support, and education tool, included a 12‑month run‑in period before the primary evaluation period. This cross-sectional, single-centre survey was conducted during this run-in period, assessing patients’ views on AI use for chest X-rays during early implementation of the AI tool.

Survey Administration

Following research governance consultation, it was advised that the survey could meet the criteria for a service evaluation. GRACE, which assessed the AI tool itself, had previously been registered with NHS Grampian as a service evaluation. This patient‑perspective survey was registered under the same pathway. The survey collected anonymous information from patients who were attending their chest X-ray appointments at the Outpatient Radiology Clinic, Aberdeen Royal Infirmary, UK (July–September 2022). No NHS data was collected. Patients were approached and invited to participate during their outpatient radiology clinic visit.

A thorough review of previous research questionnaires that had evaluated patient perceptions of AI in healthcare was conducted to identify the diverse approaches utilised. Based on this review, a self-administered questionnaire was co-designed with clinical, patient, and public involvement (Aberdeen Centre for Health Data Science, School of Medicine, Medical Sciences and Nutrition, UK) and academic input to enable a survey of patient perceptions on the use of AI in radiology in relation to chest X-ray. Closed questions were utilised along with open-ended questions to enable participants to provide further information.

The questionnaire used for this study (Supplementary Figure 1) was administered in paper format and in English only. The survey included a brief introductory statement offering participants a general overview of the purpose of the service evaluation. The range of information captured included patient demographics (sex, age, and educational background), self-perceived knowledge of AI, and patient views on the use of AI in chest X-ray. Completion of the questionnaire was taken as consent to participate. A secure box was provided for submission of completed questionnaires within the radiology department to ensure anonymity of participant responses.

Data Handling and Statistical Analysis

Following completion of the questionnaire administration process, the responses obtained were manually entered into SPSS (Version 27; IBM, Armonk, New York, USA). Data entry was reviewed for quality control purposes to identify and correct errors. Statistical analyses were performed in R (version 4.4.2). Demographic information, including age, sex, and educational background, was summarised using descriptive statistics. Frequency tables and bar charts were used to describe the responses to each question. Responses obtained for the open-ended questions were manually screened using a thematic analysis framework with corresponding quotes identified.
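As an illustration of the descriptive summaries described above, the following Python sketch tabulates one question using only answered items as the denominator, which is why denominators differ between questions in the Results. It is not the authors' code (the analyses were run in R); the function name `frequency_table` and the category labels are assumptions made for illustration. The counts for the AI-knowledge question (79 and 68 of 173 respondents, with two unanswered) are taken from the Results, and the remaining 26 answers are pooled into a single placeholder category.

```python
from collections import Counter


def frequency_table(responses):
    """Tabulate one survey question, excluding unanswered items (None)."""
    answered = [r for r in responses if r is not None]
    n_answered = len(answered)
    counts = Counter(answered)
    # Percentages use the number of answered items, not the full sample.
    table = {
        category: (count, round(100 * count / n_answered, 1))
        for category, count in counts.items()
    }
    return table, n_answered


# Counts reported for the AI-knowledge question; "other (pooled)" is a
# placeholder for the remaining 26 answers, and None marks the two blanks.
responses = (
    ["nothing"] * 79 + ["a little"] * 68 + ["other (pooled)"] * 26 + [None] * 2
)
table, n_answered = frequency_table(responses)
for category, (count, percent) in table.items():
    print(f"{category}: {count}/{n_answered} ({percent}%)")
```

Excluding blanks per question, rather than dropping partially completed surveys entirely, keeps all 175 participants in the analysis wherever they gave an answer.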

Kendall’s τb correlation analysis, with 95% CIs, was used to investigate the relationship between 1) patients’ self-reported level of AI knowledge and their overall acceptance of AI in their care; and 2) self-reported AI knowledge and approval of the tool’s triaging ability (determining the order in which chest X-rays are reviewed). τb was selected because it is appropriate for ordinal data with ties. The assumptions for τb (ordinal measurement, independence of observations, and an approximately monotonic association) were considered to be met given the study design. ‘I do not know’ responses were excluded from the correlation analyses. The 95% CIs were calculated using bootstrapping with 5,000 repetitions.
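The correlation procedure described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' R code: the function names, the numeric coding of the ordinal answers, and the toy data are all hypothetical, and the percentile bootstrap is an assumption, since the type of bootstrap interval is not stated. τb is computed as (C − D) / √((n0 − n1)(n0 − n2)), where C and D are the numbers of concordant and discordant pairs, n0 is the total number of pairs, and n1 and n2 are the numbers of pairs tied on each variable.

```python
import math
import random
import statistics
from collections import Counter


def kendall_tau_b(pairs):
    """Kendall's tau-b for (x, y) ordinal codes, with the correction for ties."""
    cells = list(Counter(pairs).items())
    concordant = discordant = 0
    # Compare each pair of distinct cells of the cross-tabulation once.
    for i, ((x1, y1), c1) in enumerate(cells):
        for (x2, y2), c2 in cells[i + 1:]:
            direction = (x1 - x2) * (y1 - y2)
            if direction > 0:
                concordant += c1 * c2
            elif direction < 0:
                discordant += c1 * c2
    n = len(pairs)
    total_pairs = n * (n - 1) // 2
    tied_x = sum(c * (c - 1) // 2 for c in Counter(x for x, _ in pairs).values())
    tied_y = sum(c * (c - 1) // 2 for c in Counter(y for _, y in pairs).values())
    denom = math.sqrt((total_pairs - tied_x) * (total_pairs - tied_y))
    return (concordant - discordant) / denom if denom else float("nan")


def bootstrap_ci(pairs, reps=5000, seed=0):
    """Percentile 95% CI (an assumed interval type) from resampled respondents."""
    rng = random.Random(seed)
    taus = []
    for _ in range(reps):
        tau = kendall_tau_b(rng.choices(pairs, k=len(pairs)))
        if not math.isnan(tau):
            taus.append(tau)
    cuts = statistics.quantiles(taus, n=40)  # cut points at 2.5%, 5%, ..., 97.5%
    return cuts[0], cuts[-1]


# Hypothetical toy data (NOT the study data): (knowledge, comfort) codes.
# None marks an "I do not know" or missing answer, which is excluded.
raw = [(0, 3), (0, 2), (0, None), (1, 3), (1, 4), (2, 4), (2, 5),
       (3, 5), (None, 4), (1, 2), (0, 4), (3, 4)] * 5
pairs = [(k, c) for k, c in raw if k is not None and c is not None]
tau = kendall_tau_b(pairs)
ci_low, ci_high = bootstrap_ci(pairs, reps=1000)
print(f"tau-b = {tau:.2f}, 95% CI: {ci_low:.2f} to {ci_high:.2f}")
```

Resampling whole respondents, rather than resampling each variable separately, preserves the pairing between a participant's knowledge rating and their attitude rating in every bootstrap replicate.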

Nonresponse error could not be formally assessed, as no information was collected on individuals who declined to participate. Statistical significance was defined as p<0.05. Because this was a service evaluation with no prespecified hypotheses, the correlation analyses were considered exploratory, and no formal sample size calculation was performed.

The reporting of the study aligns with the Consensus-Based Checklist for Reporting of Survey Studies (CROSS) guidelines.10

RESULTS

In total, 175 patients participated with most surveys (n=149; 85.1%) completed in full. For eight of the 26 partially completed surveys, one or more answers were missing sequentially at the end of the survey. For the remaining 18, missing answers were interspersed throughout the survey. One participant required assistance in completing the survey due to a language barrier.

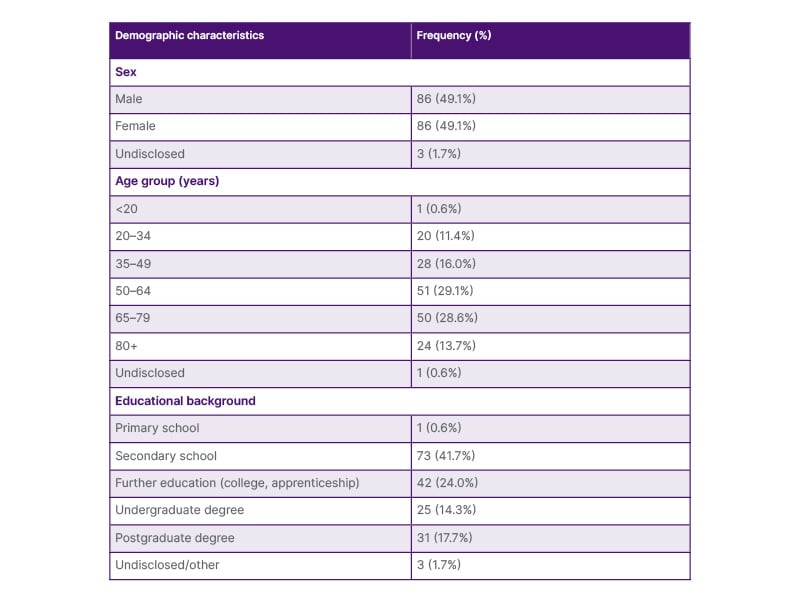

Demographic characteristics are shown in Table 1. An equal proportion of men and women completed the survey. Age was normally distributed (mean: 59.1 years; SD: 17.7 years) and ranged from 16 to 95 years. The largest group of respondents (41.7%) had secondary education as their highest attained education level. There were no significant differences in sex (χ2 test; p=0.37) or age (two-sample t-test; p=0.21) between participants who fully completed the survey and those who partially completed it.

Table 1: Demographic characteristics of survey participants.

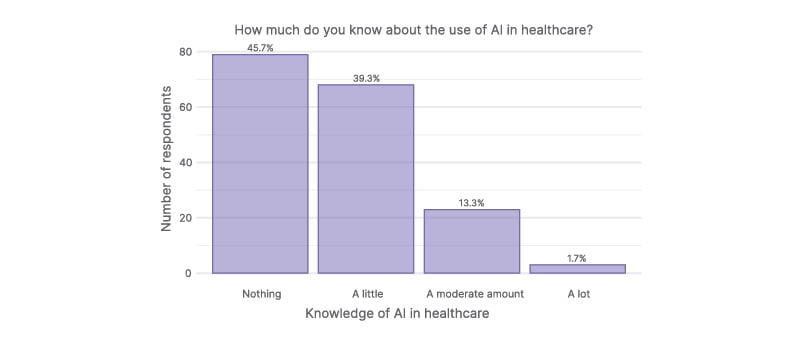

The largest group of participants (45.7%; 79/173) indicated that they knew nothing about the use of AI in healthcare, while 39.3% (68/173) said they knew a little (Figure 1).

Figure 1: Participant self‑reported knowledge of AI in healthcare.

Most participants reported knowing little to nothing about AI in healthcare. Two participants did not provide an answer.

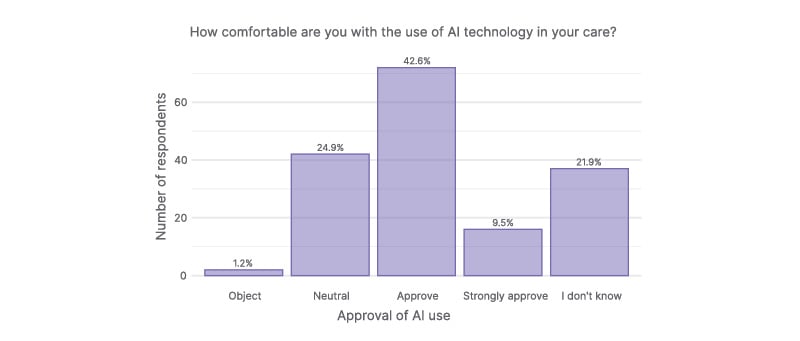

Participant attitudes towards the use of AI in their care were also assessed. Overall, 52.1% (88/169) of participants either approved or strongly approved of AI integration in their healthcare. A further 21.9% (37/169) expressed uncertainty (Figure 2). Correlational analysis showed that participants with greater self-perceived knowledge of AI were more likely to be comfortable with the use of AI in their care (p=0.003; τb=0.24; 95% CI: 0.08–0.38). Notably, only two participants objected to the use of AI in their care, both of whom reported having no or limited knowledge of AI.

Figure 2: Participant attitudes toward AI use in their healthcare.

While most participants were comfortable with the use of AI in their care, over one-fifth were unsure. Six participants did not provide an answer.

The subsequent open-ended question, ‘In a few words, can you tell us why?’, was answered by 77 participants. Two main thematic areas were identified: 1) approval and 2) lack of knowledge. Most comments (58.4%; n=45) indicated approval of AI for chest X-rays. Participants cited the prospect of improved care (‘AI aids & speeds up diagnostics’, ‘will improve diagnosis’, ‘advancement in care of patients’, ‘may improve & speed up interpretation of tests’); the reduction of human error (‘not affected by the stresses humans have’); and the potential to support healthcare (‘help with human care’, ‘anything that can help doctors help us can surely only be good’, ‘all information gained can be helpful’). Secondly, 25 comments (32.5%) related to a lack of knowledge, with one representative comment stating: ‘Don’t know enough to have an opinion!’. Only five comments (6.5%) indicated a cautious or negative view (‘technology still in infancy’, ‘it depends how much AI is used’).

Participants were further asked about how AI should be used to review their X-ray. Most (92.6%; 151/163) would prefer AI to be used as a decision aid. Only four participants (2.5%) thought AI should not be used at all, while eight participants (4.9%) thought AI should be used alone (without human input).

If this AI tool for chest X-rays were to be used in routine clinical care, 25.0% of participants (41/164) indicated that they would like the opportunity to opt out; 45.1% (74/164) would not require this option, while 29.3% (48/164) responded that they did not know.

When participants were asked about their potential concerns, most (61.9%; 99/160) indicated concern that the AI tool may make mistakes, while 30.0% (48/160) had privacy and/or security concerns about their personal data. Several (28.1%; 45/160) expressed no concerns. Five respondents highlighted other concerns in the free-text section, with four relating to potential negative impacts on care. In one open-ended response, the participant stated that they were concerned about the threat to personalised care, saying that AI ‘may undermine the care element of treatment by being impersonal’. Another indicated that AI could ‘make decisions based on cost not on chances of survival’.

Most participants (57.9%; 95/164) indicated that they thought that the AI tool would improve their care. Only one participant thought the tool would worsen care, while 34.1% (56/164) stated that they did not know how it would impact their care. When asked if they thought their doctors may become reliant on the tool instead of their medical expertise, 30.1% (49/163) answered ‘no’, while approximately half (50.9%; 83/163) answered ‘maybe’. Only 3.7% (6/163) of participants indicated ‘yes’. The remaining 15.3% of patients (25/163) responded that they did not know.

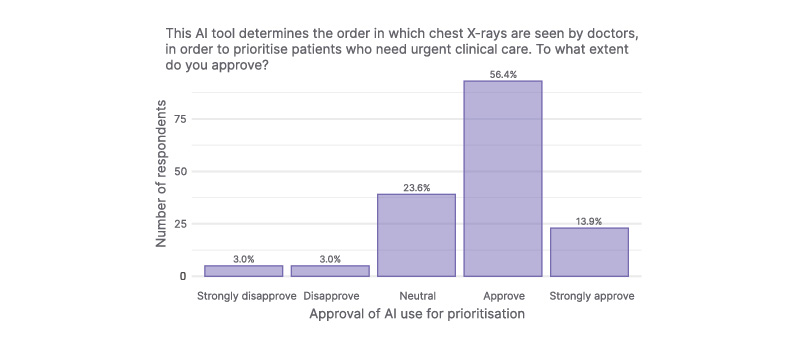

In total, 116 (70.3%) participants approved of the prioritisation functionality of the chest X-ray AI tool (Figure 3). A total of 39 participants (23.6%) were neutral about this feature, while 10 (6.1%) participants disapproved or strongly disapproved of it. Further correlational analysis showed that respondents with greater self-perceived knowledge of AI were also more likely to approve of the tool’s prioritising functionality (p=0.011; τb=0.18; 95% CI: 0.04–0.31).

Figure 3: Participant attitudes toward the AI tool’s prioritisation functionality.

Most participants approved of the AI tool’s risk stratification feature. Ten participants did not provide an answer.

There were 41 free-text responses to the final survey question asking participants whether they had any further comments. The three main thematic areas identified were: 1) approval; 2) need for additional information; and 3) AI to be used to support and not replace human decision making. Overall, 15 comments (36.6%) were positive (‘If it helps why not’, ‘great idea’, ‘should be used more’), highlighting that AI could reduce waiting lists and support doctors (‘can speed things up & take pressure off doctors. Great idea’). A further 10 comments highlighted a knowledge gap and a need for further information (‘All too new for me’, ‘I would like to know more about it’). Eight comments highlighted that AI should be used in combination with human expertise, not replace it (‘Could be an incredible tool, but a human should always be involved in any final decisions affecting patient care’), while five were negative/cautious (‘AI is dangerous’, ‘machines can make mistakes/errors too’).

DISCUSSION

The service evaluation demonstrated support for the use of AI as a decision aid for chest X-ray reporting and prioritisation, indicating a positive reception toward AI-assisted diagnostic workflows. Most participants showed optimism and confidence in the prospect of AI use in the routine delivery of chest X-ray, with very few respondents objecting. Overall, they preferred the tool to be employed as a decision aid augmenting the clinician’s role. Participants wanted more information on how the tool works and how it would be implemented in their care. The survey highlights the need to actively involve and inform patients about new developments in healthcare pathways to improve acceptability to patients.

This study is an important addition to the literature that focuses on patients’ views on the use of AI in healthcare.6,7 Importantly, it highlights that patients generally approve of the use of AI, with many believing that it may improve the care they receive, consistent with prior reports.11-13 However, most participants indicated they knew little to nothing about AI, and many highlighted that they were unsure how they felt about its use in their healthcare. With higher self-reported knowledge of AI linked to increased acceptance, these findings suggest a need to provide further information to patients regarding AI tools used in their care.

While some studies have explored patient perspectives on AI use in radiology,14 there is a lack of focused research investigating the views of patients on the use of AI in chest radiography. This study provides preliminary insights into patients’ level of support for the integration of AI for chest X-rays. Additionally, the survey offered participants a free-text section enabling them to elaborate on their opinions of AI use in chest X-ray.

The main limitation of this study is the limited generalisability of the findings beyond the local context, as only NHS Grampian patients were included. No specific arrangements were made for language barriers, as English is the primary language of communication in the setting of interest. According to Scotland’s census in 2022, 93% of Aberdeen City residents and 94% of Aberdeenshire residents fell into the category ‘Speaks, reads and writes English’, indicating few would have been excluded.15 One participant used a personal mobile app to translate the questionnaire into their preferred language, which may have introduced inaccuracies and led to inconsistent interpretation of the questions. This response could not be removed as responses were anonymous and non-identifiable. In this study, participants were asked to self-rate their knowledge of AI, which is subjective. Participants were offered a brief introduction to the AI tool on the first page of the questionnaire, which sought to standardise participants’ level of knowledge. There are also potential sources of bias. Selection bias may have occurred if individuals with stronger views or greater interest in technology were more likely to participate. Although efforts were made to frame questions neutrally, social desirability bias and aspects of the questionnaire design may still have influenced responses.

Most participants believed that the use of an AI tool in their care was advantageous, but preferred it to be used alongside human decision making and review. A similar conclusion was drawn from a systematic review of anticipated patient challenges with the introduction of AI into healthcare.16 The AI tool being assessed in this study was designed as a decision aid, meaning that decision making about the X-ray findings is the responsibility of the radiologist reading the image and not that of the AI tool. The AI tool is currently being used to prioritise images for radiologist review, giving it an independent function. Despite expressing less support for the autonomous use of AI, most respondents approved of AI use for prioritisation.

In conclusion, this study revealed that few respondents objected to the use of AI technology in the delivery of chest X-ray investigations and that support was greater in those with more knowledge of AI. This patient feedback shows a promising prospect for the use of AI in chest X-ray both for prioritisation of cases and as a decision aid. The findings also draw attention to the need for increased education regarding the use of AI in healthcare.