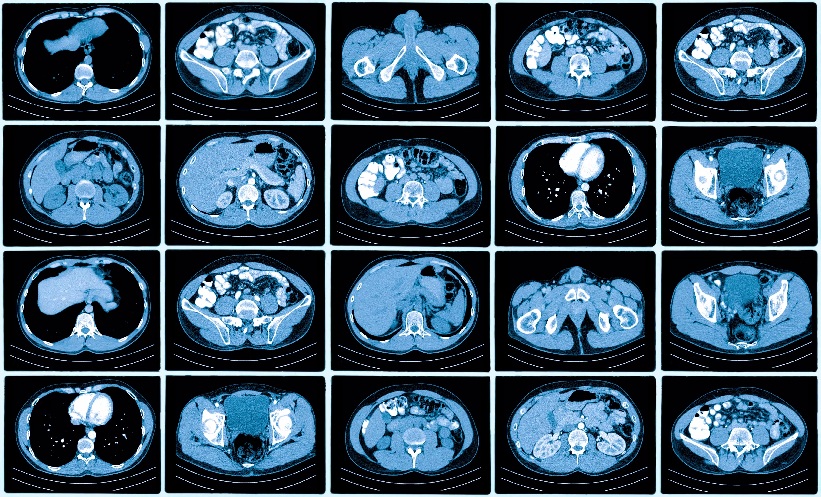

ARTIFICIAL INTELLIGENCE (AI) has been widely integrated into medical imaging to enhance the precision and reliability of diagnosis and the treatment of various diseases. However, current AI-assisted imaging workflows require substantial human resources to supervise modelling, prepare comparative radiology reports, and apply human-defined rules. The accuracy of AI-integrated imaging techniques therefore still depends on a large amount of time-consuming human work.

Research by an engineering team at the University of Hong Kong has culminated in a new approach to AI medical imaging: Reviewing Free-Text Reports for Supervision (REFERS). This approach can cut human costs by 90% by automatically drawing on data from thousands of radiology reports at the same time. Its prediction accuracy surpasses that of conventional AI-based medical image diagnosis.

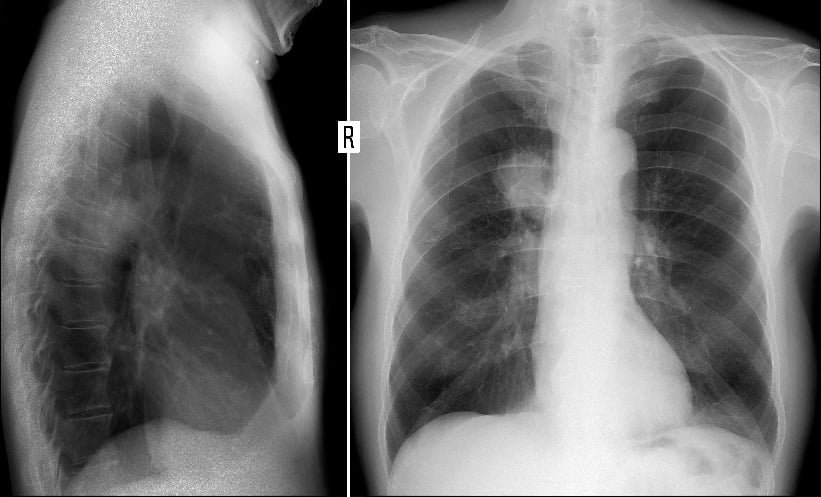

REFERS is based on two report-related tasks: report generation and radiograph-report matching. In the first, REFERS translates radiographs into text reports and measures the similarity between the predicted and the real reports. In the second, contrastive learning is used to align free-text reports with their radiographs. The system was trained using a public database of 370,000 X-ray images. The radiograph-recognition model was built using 10,000 radiographs and achieved an accuracy of 90.1%. Generally, a prediction accuracy above 85.0% makes an AI-imaging system useful for real-world clinical applications.
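As a rough illustration of the radiograph-report matching idea, the sketch below computes a symmetric contrastive loss of the kind commonly used to align paired image and text embeddings. It is not the REFERS implementation: the function names, the temperature value, and the toy four-dimensional embeddings are all assumptions made for this example, and a real system would obtain the embeddings from trained image and text encoders.

```python
import math


def _normalise(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def _row_cross_entropy(logits, target):
    """Cross-entropy of a softmax over `logits` against the index `target`."""
    peak = max(logits)  # subtract the max for numerical stability
    log_sum = peak + math.log(sum(math.exp(v - peak) for v in logits))
    return log_sum - logits[target]


def contrastive_matching_loss(image_embs, report_embs, temperature=0.1):
    """Symmetric contrastive loss (hypothetical helper, not the REFERS code).

    Row i of `image_embs` is assumed to be paired with row i of `report_embs`.
    The loss is low when each radiograph is most similar to its own report
    and each report is most similar to its own radiograph.
    """
    imgs = [_normalise(v) for v in image_embs]
    txts = [_normalise(v) for v in report_embs]
    n = len(imgs)
    # Temperature-scaled cosine similarity between every image and every report.
    sim = [
        [sum(a * b for a, b in zip(imgs[i], txts[j])) / temperature for j in range(n)]
        for i in range(n)
    ]
    # Image-to-report direction: each row should peak on the diagonal.
    img_to_txt = sum(_row_cross_entropy(sim[i], i) for i in range(n)) / n
    # Report-to-image direction: each column should peak on the diagonal.
    txt_to_img = sum(
        _row_cross_entropy([sim[i][j] for i in range(n)], j) for j in range(n)
    ) / n
    return 0.5 * (img_to_txt + txt_to_img)


# Toy embeddings (assumed values): four reports and four matching radiographs.
reports = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]]
aligned = [[0.9, 0.1, 0, 0], [0, 0.9, 0.1, 0], [0, 0, 0.9, 0.1], [0.1, 0, 0, 0.9]]
shuffled = aligned[1:] + aligned[:1]  # every radiograph paired with the wrong report

loss_aligned = contrastive_matching_loss(aligned, reports)
loss_shuffled = contrastive_matching_loss(shuffled, reports)
print(f"aligned pairs: {loss_aligned:.4f}, mismatched pairs: {loss_shuffled:.4f}")
```

Correctly paired radiographs and reports give a much lower loss than mismatched ones, which is the signal such a training objective uses in place of manually assigned labels.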

“With the appropriate training, REFERS directly learns radiograph representations from free-text reports without the need to involve manpower in labelling,” explained research team lead Yu Yizhou, Department of Computer Science, Faculty of Engineering, University of Hong Kong. “AI-enabled medical image diagnosis has the potential to support medical specialists in reducing their workload and improving the diagnostic efficiency and accuracy.”